“Any sufficiently advanced technology is indistinguishable from magic”.

So said the late, great science fiction author Arthur C. Clarke. Technology is magical. When it works. In just the last decade, technology has accelerated such significant transformations in the fields of education, commerce, healthcare and connecting communities that most discussions revolve around the fear surrounding job losses. In some cases, that fear is well placed, but the concept of technology “changing” people’s jobs into something new and uncertain is not a new argument.

You can see this whether you look at how machines are automating processes today, or at how the mainframes and ATMs of the 1960s revolutionised the world but initially terrified the programmers and bank clerks who were fearful of losing their jobs (they didn’t, of course; they just worked differently). You could go back even further to Henry Ford’s production lines for the Model T, when car manufacture was first automated, or even to Josiah Wedgwood’s Staffordshire-based ceramic production line of the late 1700s (probably the first example of industrial automation).

Today, it’s easy to be amazed by technology when you see an AI create highlights for an event like Wimbledon without any human involvement, or help to clean up the air in Beijing using complex weather models and IoT sensors. It is exciting, but humans have always viewed technological progress with equal amounts of enthusiasm and anxiety, and rightly so.

The technologies helping with today’s efforts to combat climate change are no different. There are two sides to every coin of course, but until recently, the dark side of this particular coin was relatively unknown. As we get increasingly skeptical about whether or not these magical forces are actually doing more harm than good, three researchers from the University of Massachusetts Amherst have been exploring the energy and policy considerations for deep learning in NLP (natural language processing). This is the part of the world which I work in, and it covers systems like IBM’s Watson and the vast processing power that is helping to support many of the world’s biggest banks, retailers, energy providers, airlines and healthcare companies. I sometimes describe Watson as like having a really smart best friend who can help you to make decisions faster, by having the capacity to read up to 1 million books a second.

I spend a lot of my time on stage explaining how AI technologies like Watson work and what they are capable of. For more on that you can listen to one of my lectures here, but suffice to say that when you have access to a system which is able to process over 10 million records a second and make instant decisions about the next best action, it generates a lot of interest and all kinds of interesting possibilities for professionals in every industry. For bite-sized chunks of specific use cases you can have a look at this attractive little site, but you get the idea. NLP engines are powerful, and while the case studies around AI and deep learning show some impressive evidence of how they are improving people’s lives, at what cost? Well, those researchers at the University of Massachusetts Amherst, Emma Strubell, Ananya Ganesh and Andrew McCallum, have been giving it some thought and here’s what they found.

Deep learning has a terrible carbon footprint.

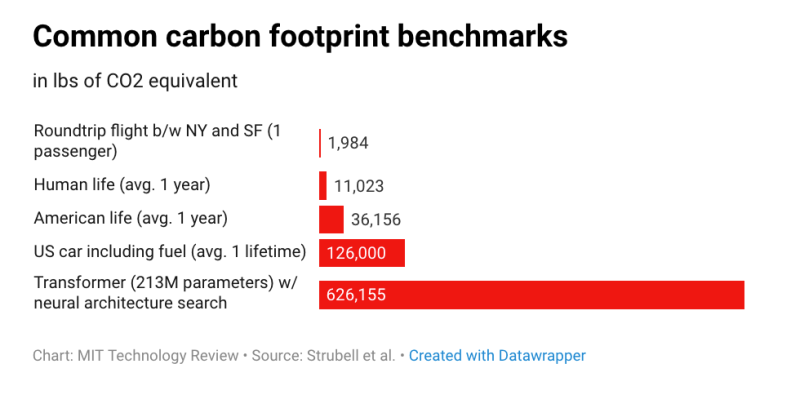

In fact, in some cases it is so bad that training a single AI model can emit as much carbon as five cars in their lifetimes. In their new paper, the researchers performed a life cycle assessment for training several common large AI models. They found that the process can emit more than 626,000 pounds of carbon dioxide equivalent, nearly five times the lifetime emissions of the average American car (and that includes manufacture of the car itself).

Karen Hao, who wrote a great piece on this for MIT Technology Review, stated that this is something that AI researchers have suspected for a long time. She also made a keen observation that the subjects of ‘big data’ and artificial intelligence are often compared to the oil industry, because once mined and refined, data, like oil, can be a highly lucrative commodity. But now it seems the metaphor may extend even further. Like its fossil-fuel counterpart, the process of deep learning has an outsize environmental impact.

The Carbon Footprint of Natural-Language Processing

The paper specifically examines the model training process for natural-language processing (NLP), the subfield of AI that focuses on teaching machines to handle human language. In the last two years, the NLP community has reached several noteworthy performance milestones in machine translation, sentence completion, and other standard benchmarking tasks. OpenAI’s infamous GPT-2 model, as one example, excelled at writing convincing fake news articles.

But such advances have required training ever larger models on sprawling data sets of sentences scraped from the internet. The approach is computationally expensive—and highly energy intensive.

The researchers looked at four models in the field that have been responsible for the biggest leaps in performance: the Transformer, ELMo, BERT, and GPT-2. They trained each on a single GPU for up to a day to measure its power draw. They then used the number of training hours listed in each model’s original paper to calculate the total energy consumed over the complete training process. That number was converted into pounds of carbon dioxide equivalent based on the average energy mix in the US, which closely matches the energy mix used by Amazon’s AWS, the largest cloud services provider.
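To make that calculation a little more concrete, here is a rough Python sketch of the steps described above: measured power draw multiplied by training hours gives energy, and energy multiplied by an emissions factor gives pounds of CO2 equivalent. The function name, the `overhead_factor` parameter (a multiplier for data-centre overhead such as cooling) and every number in the example are my own illustrative assumptions, not figures taken from the paper.

```python
def training_emissions_lbs(avg_power_watts, training_hours,
                           overhead_factor, lbs_co2e_per_kwh):
    """Estimate the CO2e (in pounds) emitted by one training run.

    All four inputs are assumptions supplied by the caller:
    - avg_power_watts: average power draw measured during training
    - training_hours: total hours of training reported for the model
    - overhead_factor: hypothetical multiplier for data-centre overhead
    - lbs_co2e_per_kwh: emissions factor for the local energy mix
    """
    inputs = (avg_power_watts, training_hours, overhead_factor, lbs_co2e_per_kwh)
    if min(inputs) < 0:
        raise ValueError("all inputs must be non-negative")

    # Watts * hours gives watt-hours; divide by 1000 for kilowatt-hours,
    # then scale up to account for the data-centre overhead.
    energy_kwh = overhead_factor * avg_power_watts * training_hours / 1000.0

    # Convert energy into pounds of CO2 equivalent.
    return energy_kwh * lbs_co2e_per_kwh


# Purely illustrative numbers, not measurements from the paper.
estimate = training_emissions_lbs(
    avg_power_watts=1500.0,
    training_hours=100.0,
    overhead_factor=1.5,
    lbs_co2e_per_kwh=1.0,
)
print(f"{estimate:,.0f} lbs CO2e")
```

The point of the sketch is simply that the estimate scales linearly with training time and hardware power, which is why ever larger models trained for ever longer come with an ever larger footprint.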

I encourage you to read the original research paper here. It’s quite heavy going but worth the effort. It sheds light on the environmental impact of some of these world-changing technologies, and before we run away with ourselves thinking that technological progress is always, usually… mostly… sometimes(?)… very positive, it’s good to remind ourselves that there are always two sides to every story. Emerging technologies need to be guarded and protected, but they also need to be reported and regulated clearly and transparently. And even the most progressive technology companies, especially the most progressive technology companies, need to be aware of the impact of their work and behave in an environmentally responsible manner.

- If you want to learn more about the progress of AI, the Electronic Frontier Foundation, a brilliant non-profit organisation that campaigns for an open and transparent web, has a great resource here covering many aspects of research in AI.